A Policy That Allows Cloning A CodeCommit Repository and Pushing It To An S3 Bucket

ACM.347 Creating a generic Lambda policy template that allows passing in actions and resources


In the last post I had to revisit my generic Lambda deployment since I couldn’t get my initial plan to assume a role with MFA to work.

An AWS policy to move files from CodeCommit to S3

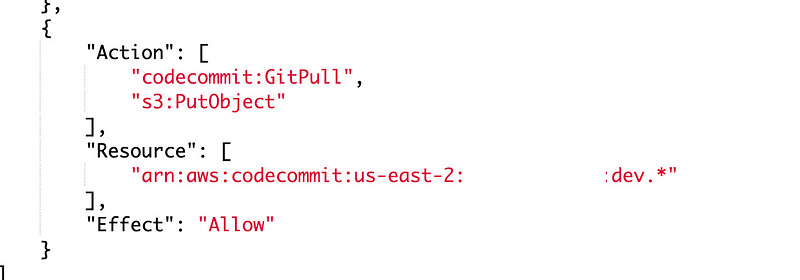

In this post I need to create the Lambda-specific policy that will allow the Lambda function to do the following:

- Clone an AWS CodeCommit repository.

- Push the files to an AWS S3 bucket.

I want the policy to be generic for any repository and bucket combination, so I can redeploy the same template and pass in the repository and bucket for a particular website. For testing I’m using this website, and I have already created both a CodeCommit repository and an S3 bucket with this name:

dev.rainierrhododendrons.com

What are the AWS actions I need in my policy?

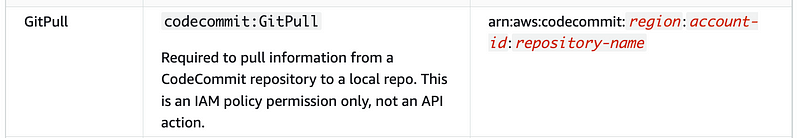

To clone the repository we will need this permission:

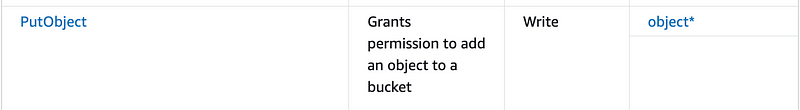

Then the Lambda function needs to be able to copy the code to S3.

The AWS S3 action is called PutObject. That’s because the things you store in S3 are technically objects, not files, but for our purposes it’s fine to think of them as files. No reason to be overly technical.

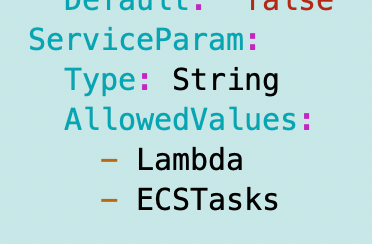
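Since the screenshots are not reproduced as text, here is a minimal sketch of the two permissions written as explicit IAM statements in CloudFormation YAML. `codecommit:GitPull` is the IAM action that covers cloning a repository, and `s3:PutObject` is the action named above. The logical ID, account ID, and Region are placeholders I made up for illustration, and this is not necessarily how the original template is laid out.

```yaml
# Illustrative only: logical ID, account ID, and Region are placeholders.
Resources:
  CloneAndPushPolicy:                 # hypothetical logical ID
    Type: AWS::IAM::ManagedPolicy
    Properties:
      PolicyDocument:
        Version: "2012-10-17"
        Statement:
          # Cloning (git clone / git pull) a CodeCommit repository
          - Effect: Allow
            Action: "codecommit:GitPull"
            Resource: "arn:aws:codecommit:us-east-1:111122223333:dev.rainierrhododendrons.com"
          # Writing objects to the bucket; PutObject applies to object ARNs
          - Effect: Allow
            Action: "s3:PutObject"
            Resource: "arn:aws:s3:::dev.rainierrhododendrons.com/*"
```

When the bucket lives in a different account, an identity policy like this is not enough on its own; the bucket policy in the other account has to allow the role as well.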

I’ve already got the AppPolicy.yaml template that grants the network permissions required for Lambda, but we should add those permissions only when the service is a Lambda function.

The service is passed in as a parameter:

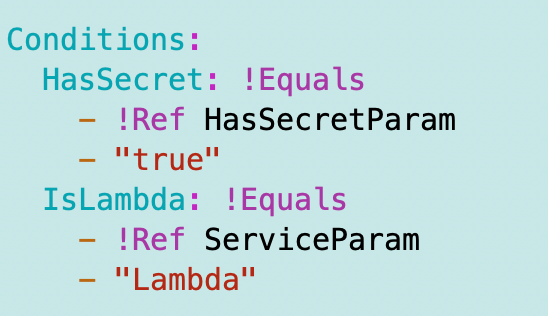

Add an IsLambda condition:

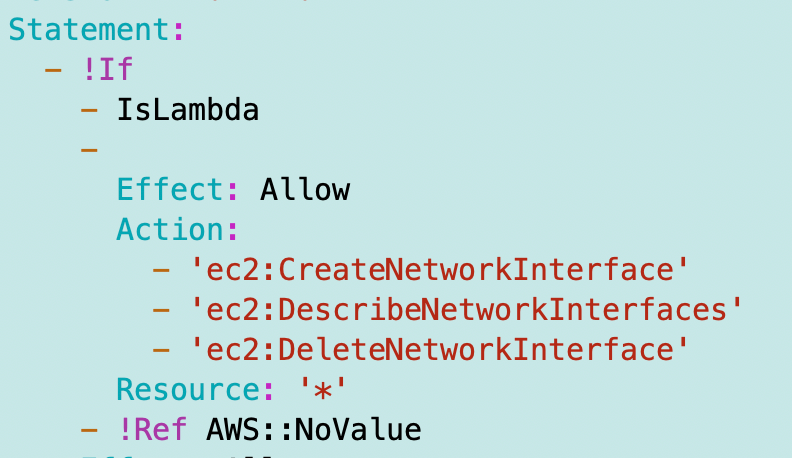

Only allow the networking permissions if it’s a Lambda function:
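As a rough sketch of how the parameter, the `IsLambda` condition, and the conditional statement can fit together: the parameter name `ServiceParam`, the logical ID, and the exact list of EC2 actions are assumptions here (the actions are the network interface permissions a VPC-attached Lambda function typically needs), not a copy of the AppPolicy.yaml template.

```yaml
# Sketch under assumptions: ServiceParam and AppPolicy are hypothetical names.
Parameters:
  ServiceParam:
    Type: String
    Description: Service the policy is for, e.g. lambda

Conditions:
  # True only when the template is deployed for a Lambda function
  IsLambda: !Equals [!Ref ServiceParam, lambda]

Resources:
  AppPolicy:
    Type: AWS::IAM::ManagedPolicy
    Properties:
      PolicyDocument:
        Version: "2012-10-17"
        Statement:
          # Emit the networking statement only for Lambda;
          # AWS::NoValue drops the list item otherwise.
          - !If
            - IsLambda
            - Effect: Allow
              Action:
                - "ec2:CreateNetworkInterface"
                - "ec2:DescribeNetworkInterfaces"
                - "ec2:DeleteNetworkInterface"
              Resource: "*"
            - !Ref AWS::NoValue
          # The template's other statements go here. At least one must
          # remain when IsLambda is false, or the policy document is empty.
```

Using `Fn::If` with `AWS::NoValue` keeps a single template for every service instead of maintaining a separate Lambda-only copy.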

I redeploy that policy to make sure it still works.

Networking Restrictions in SCP don’t work with CloudFormation

I’m getting blocked by my SCP again, which restricts Lambda traffic to the VPC endpoint. When I check the VPC Flow Logs for the VPC endpoint, I don’t see any traffic related to the GetFunction API calls. So at this point I stopped to figure out what was going on.

Here’s a post on the problem. If you limit actions to specific IP addresses, CloudFormation makes its calls with your user as the principal but from CloudFormation’s own IP address, so the requests fail the restriction. There seems to be no way to create a viable SCP to solve this at the time of this writing. But I’m going to keep thinking on that.

One idea would be to completely separate automation accounts from human accounts as I have done in the past and closely monitor for unauthorized activity. The other is to open up the activity by temporarily removing the SCP while actively deploying things — which is not ideal. Maybe I’ll think of a way to write an SCP for this later but that’s not the problem I’m trying to solve in this post.

Lambda-specific permissions

Now I need to add the extra permissions. There are a myriad of ways I can do this but I’m going to pass in a list of actions and a list of resources to create a policy. Let’s see how that works out. I got that idea from looking at the way the AWS console creates a policy. It’s much harder to read but simpler to create a policy that way.

The main problem with this approach is that all of the actions share one statement, so we cannot add conditions that apply only to a specific service. But for now this should work.

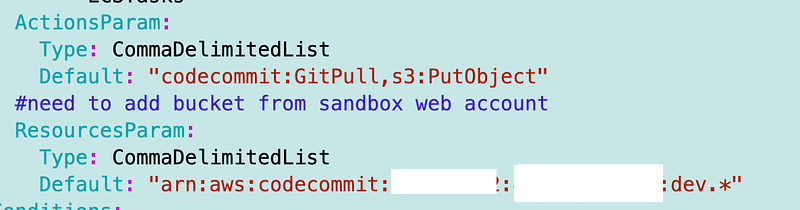

Here’s what I’m going to add as a test, using defaults for the moment. I’ll move the defaults to my script after testing that this works.

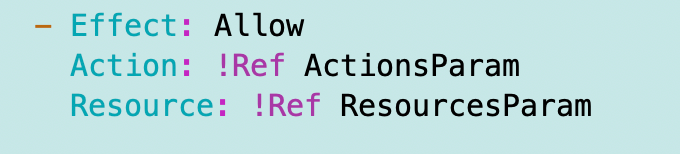

Then I create a policy statement like this:
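A minimal sketch of the idea, assuming the lists are passed as `CommaDelimitedList` parameters: the parameter names, logical ID, default values, account ID, and Region below are placeholders for illustration. Only the repository ARN appears in the resource default, since the bucket resource is not added in this post.

```yaml
# Sketch under assumptions: parameter names and defaults are hypothetical.
Parameters:
  ActionsParam:
    Type: CommaDelimitedList
    Default: "codecommit:GitPull"
  ResourcesParam:
    Type: CommaDelimitedList
    # Placeholder account ID and Region; parameter defaults must be literals
    Default: "arn:aws:codecommit:us-east-1:111122223333:dev.rainierrhododendrons.com"

Resources:
  LambdaFunctionPolicy:
    Type: AWS::IAM::ManagedPolicy
    Properties:
      PolicyDocument:
        Version: "2012-10-17"
        Statement:
          # !Ref on a CommaDelimitedList yields a list of strings, so the
          # same statement serves any combination of actions and resources.
          - Effect: Allow
            Action: !Ref ActionsParam
            Resource: !Ref ResourcesParam
```

Every action in the list is granted on every resource in the list, which is what makes the template generic and also why service-specific conditions do not fit in it.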

I run my Lambda deploy script, which deploys my app role, to test the policy change, and thankfully it works on the first try.

We may need some additional permissions upon testing. If we do I’ll link to that here.

Now you may have noticed I didn’t add the bucket resource. In a prior post I created my website bucket in an account dedicated for that purpose. That way I can create a very strict SCP to limit the chance that my websites are updated inappropriately.

I am going to add the ability to update a bucket in another account in the next post because we need to allow access for the cross-account role in the S3 bucket policy as well.


Teri Radichel | © 2nd Sight Lab 2023
