The Corporate Governance Failure at the Heart of Sam Altman’s Ouster from OpenAI

Rendered by Bing Image Creator

In this piece I examine both the flawed corporate structure of OpenAI, and the neglect of governance evolution during a year of immense change. Those things led to the chaos that is still unfolding around the firing of Sam Altman. I conclude that alternative governance structures aren’t the issue, as some may readily surmise. Nonetheless, how OpenAI’s particular structure was set up and managed left it vulnerable to calamity. Other alternative structures, such as those instituted by Anthropic, were more thoughtfully put together, better harmonize the interests of multiple stakeholders, and should be more resilient to slow divergences of goals or sudden organizational shocks.

On Friday, November 17, 2023, CEO and co-founder Sam Altman was unceremoniously fired from OpenAI by four members of the company’s nonprofit board. As of Monday, November 20, 2023, three days after he was fired, it seems Mr. Altman will be joining Microsoft as CEO of a new AI division that he will help create. He will be joined at Microsoft by Greg Brockman, OpenAI’s former board chair and president, who quit last Friday within hours of Mr. Altman’s ouster and his own removal as board chair. Other senior employees have since resigned with the intent of joining the new Microsoft division, and many more are likely to follow. Reportedly, at least 650 of OpenAI’s 770 employees have signed a petition demanding that the company take Mr. Altman back and get rid of its board. In addition to employee shock and outrage, OpenAI’s investors were very upset about Mr. Altman’s sudden firing, which came without advance notice to any of them and seemingly without substantive provocation. The company was on the verge of another financing round that would have entailed a secondary sale of employee shares, valuing OpenAI at $87 billion, triple its current valuation. That financing appears to be in jeopardy (the valuation certainly cannot stand given how much destabilization has been introduced to the company), which harms the interests of both employees and previous investors.

Despite scrambling over the weekend to repair the damage done by Mr. Altman’s firing and to bring him back, the board ultimately preserved its own members’ roles (for the time being) and quickly replaced interim CEO Mira Murati (OpenAI’s CTO, designated as interim CEO only on Friday) with Emmett Shear, former CEO of Twitch. In the wee hours of Monday morning, Ilya Sutskever, co-founder and Chief Scientist of OpenAI and one of the board members who orchestrated Mr. Altman’s ouster, expressed remorse over what has transpired: “I deeply regret my participation in the board’s actions. I never intended to harm OpenAI.” The fate of OpenAI hangs in the balance. The company has been harmed, deeply, and it remains to be seen how it will recover. Regardless of what prompted the board to remove Mr. Altman in the first instance, no one could have wanted the chaos and uncertainty brought about by both the ouster and the manner in which it was carried out. This is all a grand fiasco. Of the many things to be said (and still to be discovered) about the Shakespearean drama unfolding before our eyes, it is safe to say now that it is an unmitigated failure of corporate stewardship.

What transpired at OpenAI represents a huge and unmistakable failure of corporate governance. This is the case whether or not Mr. Altman returns after his firing (who knows, he still might). How he was terminated in the first instance, and the chaos that followed, provide all the evidence of failure that we need. The lens of governance offers an interesting, and perhaps the most probative, view of what went wrong at OpenAI. The data points from the last few days are susceptible to multiple readings. Even those who agree there was a failure of governance may ascribe it to different causes. Some commentators will be happy that the will of the board prevailed; they will see that as a win for board governance (see, e.g., here, arguing that a quick return by Altman to OpenAI under employee and investor pressure would have been a failure). Others may blame the alternative governance structure of the company and contend that anything too creative or exotic that leaves key shareholders out of oversight and decision making is flawed.

Basic Corporate Governance

For-profit companies typically have boards of directors. Venture-backed start-up boards typically include one or two company executives, as well as representatives from the VC firms that contributed the most capital. It is healthy to have other outside, or independent, directors, who can bring fresh perspectives on governance and management matters without being overly swayed by self-interest. Corporate board members have fiduciary obligations to the company, typically to promote positive business performance, ensure legal and regulatory compliance, and maximize shareholder returns.

Nonprofit company boards operate in much the same way. There are independent board directors, perhaps a representative from one or more of the biggest philanthropic backers of the organization and some executive staff. The big difference is that nonprofits don’t exist to maximize shareholder value or profit. They typically are formed with a charitable or social benefit goal as their primary mission.

Neither for-profit nor nonprofit boards have specific fiduciary obligations to their organization’s employees or outside stakeholders (such as customers or business partners) beyond the general duty to maximize the organization’s performance. Most healthy organizations nonetheless attend to diverse stakeholder interests, knowing that going beyond what fiduciary duties or legal requirements demand tends to support performance, whether that means maximizing shareholder value or fulfilling a charitable or social benefit goal.

Corporate Governance at OpenAI

OpenAI was founded as a nonprofit in late 2015. The nonprofit form was chosen “with the goal of building safe and beneficial artificial general intelligence for the benefit of humanity.” As framed by the company, OpenAI “believed a 501(c)(3) would be the most effective vehicle to direct the development of safe and broadly beneficial AGI while remaining unencumbered by profit incentives.” However, after a few years in operation, the company realized that the capital needed to fuel its ambitions could not be obtained through a nonprofit entity alone. As OpenAI explains,

“It became increasingly clear that donations alone would not scale with the cost of computational power and talent required to push core research forward, jeopardizing our mission. So we devised a structure to preserve our Nonprofit’s core mission, governance, and oversight while enabling us to raise the capital for our mission”

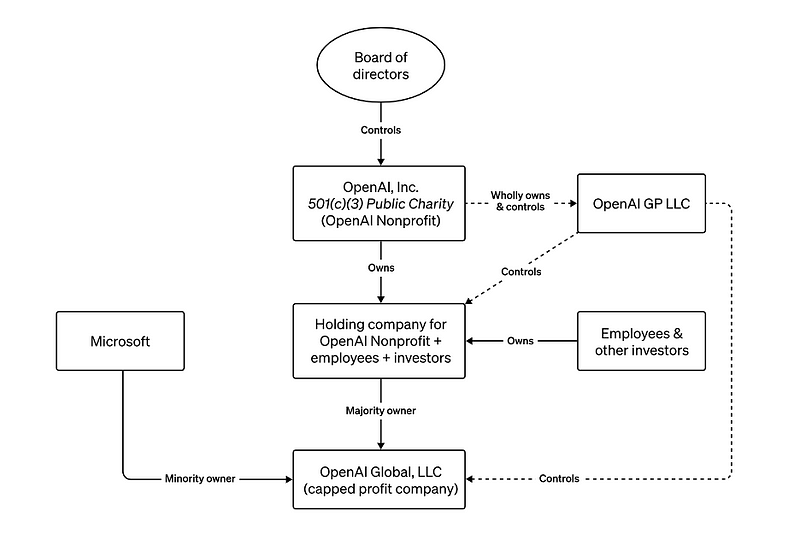

In anticipation of receiving a $1 billion initial investment from Microsoft in 2019, OpenAI under Mr. Altman’s stewardship created for-profit subsidiaries under the original nonprofit. These for-profit entities could legally accept outside investment and provide equity interests to OpenAI employees. The key for-profit subsidiary included a “capped profit” mechanism, whereby investors could earn at most a 100x return on their investments, with any excess above that cap returned to the nonprofit for use towards its broader mission.
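To make the arithmetic of the cap concrete, here is a minimal Python sketch of how a capped return might be split between an investor and the nonprofit. It is an illustration only: the function name `split_capped_return`, its parameters, and the sample figures are hypothetical, and the actual contractual terms (which may differ by investor or financing round) are not described here.

```python
from typing import Tuple


def split_capped_return(invested: float, gross_return: float,
                        cap_multiple: float = 100.0) -> Tuple[float, float]:
    """Split a gross return between an investor and the nonprofit.

    Simplified, hypothetical model: the investor keeps returns up to
    cap_multiple times the amount invested; anything above that cap
    flows to the nonprofit. Real agreements are likely more complex.
    """
    if invested <= 0:
        raise ValueError("invested must be positive")
    if gross_return < 0:
        raise ValueError("gross_return must be non-negative")
    cap = invested * cap_multiple
    to_investor = min(gross_return, cap)
    to_nonprofit = max(gross_return - cap, 0.0)
    return to_investor, to_nonprofit


if __name__ == "__main__":
    # Hypothetical figures in billions of dollars: $1B invested.
    print(split_capped_return(1.0, 40.0))   # below the cap: nothing goes to the nonprofit
    print(split_capped_return(1.0, 150.0))  # above the cap: the excess goes to the nonprofit
```

Under these assumptions, a $1 billion investment that eventually returned $150 billion would leave $100 billion with the investor and send $50 billion to the nonprofit, while any return at or below $100 billion would stay entirely with the investor.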

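OpenAI’s overall corporate structure, in which a single board controls the nonprofit entity that sits above and controls all other affiliated entities. Before Sam Altman’s ouster, the board consisted of three OpenAI executives (Greg Brockman (chair), Sam Altman and Ilya Sutskever) and three independent directors (Adam D’Angelo, CEO of Quora; Tasha McCauley, CEO of GeoSim Systems; and Helen Toner, Director of Strategy and Foundational Research Grants at Georgetown University’s Center for Security and Emerging Technology).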

This approach was not without controversy. It prompted senior defections from the company, leading, for instance, to the founding of Anthropic in 2021 by Dario and Daniela Amodei. Apparently, some tensions remained from the decision to accept outside funding, and these intensified in 2023 once ChatGPT became a breakout success, with Microsoft and other investors pouring many billions of dollars into the company. It is worth noting that Ilya Sutskever acknowledged, and seemed sanguine about, the new structure when it was created in 2019. He stated at the time, “In order to build AGI you need to have billions of dollars of investment … We created this structure in order to raise this capital while staying aligned with our mission.”

Governance & Judgment Failures

As outlined, OpenAI’s governance structure is a retrofit. The failure of governance that it experienced in the lead-up to November 17 is due in part to that structure. In larger part, though, it is due to how the structure was neglected while the company quickly changed.

→ Letting the nonprofit board membership languish following defections in early 2023. In addition to the six members that it had immediately before Mr. Altman’s firing and Mr. Brockman’s demotion on November 17, 2023, the OpenAI board previously counted as members Reid Hoffman, an experienced Silicon Valley company operator and investor; Will Hurd, a former Texas Congressman; and Shivon Zilis, a director at Elon Musk’s Neuralink. These three stepped down as board members in the spring of 2023 owing to various conflicts. Inadequate effort was made to replace them or to supplement the board with additional expertise and experience, as might befit a company that had become worth tens of billions of dollars almost overnight. Mr. Altman may have had a lot of trust in the three outside directors, and certainly in Mr. Brockman. But trust alone is sometimes inadequate to preserve prudent, transparent decision making and good judgment. It is entirely unclear whether Adam D’Angelo, Helen Toner, Tasha McCauley and Ilya Sutskever had the depth and breadth of experience to guide OpenAI in line with its nonprofit mission, much less to balance that mission against a broader set of stakeholder interests in a multibillion-dollar, globally prominent enterprise. It is clear that they exercised poor judgment in how they went about terminating Mr. Altman. Everyone would have been better served by a bigger board, with more maturity and more diverse expertise on matters concerning AGI and its prospective social impact, as well as on harmonizing these interests with those of investors and other stakeholders.

→ Inadequate review processes for the CEO. As of this writing, I do not have access to OpenAI, Inc.’s bylaws or other governing documents. However, we know that the decision to fire Mr. Altman arose from a majority vote (four of the six directors). CEOs serve at the pleasure of their boards and can be removed by a majority decision. However, disagreements over the strategic direction of a company typically should not lead to the termination of an otherwise high-performing CEO absent malfeasance or a persisting impasse deemed detrimental to the business. Prudent practice typically involves investigation, documentation and articulation of objective evidence of deficiencies or misdeeds. The OpenAI board’s stated reason for terminating Mr. Altman is the vague characterization that he was not “consistently candid” with the board, “hindering its ability to exercise its responsibilities.” However, subsequent revelations make clear that he was not engaged in any malfeasance and that there was “no specific disagreement about safety.” Without knowing more, the decision to oust Mr. Altman seems based on personality conflicts or personal disagreements that plausibly could or should have been subject to dispute resolution procedures or consultation with other interested stakeholders. Governing documents don’t always provide for such procedures, but good governance often involves following them voluntarily when a CEO has not shown wrongdoing or underperformance. The nonprofit board had no seats for investors, nor even an advisory committee of investors. On the one hand, this may have avoided any corrupting influences. On the other, it deprived the board of the wisdom that “smart money” can often bring to the table, particularly in situations involving difficult strategic decisions or disagreements having a material impact on the company.

→ The board overstepped an overly narrow mission focus. The mission of OpenAI is “to ensure that artificial general intelligence benefits all of humanity.” In that regard, safety for AGI is listed as a paramount objective. AGI, of course, remains an aspirational goal for OpenAI. Per Mr. Altman in early November, the company’s current LLM products are not the way to get to AGI or superintelligence. As such, the commercial success of products like ChatGPT and GPT-4 does not implicate AGI or AGI-related safety. There are plenty of immediate policy and user safety issues to be concerned about with the AI products currently available to the public. But these are not of the existential-risk or severe public-safety-risk variety associated with AGI or superintelligent AI. Nor was there a promise to permit Microsoft or others access to potentially dangerous technology. For instance, since at least June 2023, the company had made clear that:

“While our partnership with Microsoft includes a multibillion dollar investment, OpenAI remains an entirely independent company governed by the OpenAI Nonprofit. Microsoft has no board seat and no control. And … AGI is explicitly carved out of all commercial and IP licensing agreements.”

Whatever concerns the OpenAI board may have held about the commercial direction of the company under Mr. Altman’s leadership, these did not immediately implicate their mandate under the stated mission of the non-profit. Moreover, Mr. Sutskever himself had assumed control over AGI and Superintelligence research at OpenAI, particularly the goal of developing and managing those hoped-for achievements safely.

The overall point here is that once OpenAI changed overnight and brought the world along with it, it should have been abundantly clear that more was needed to harmonize its various motives and interests. The business of the company was burgeoning around Generative AI, and that, in and of itself, was not the mission focus of the nonprofit running the company. Either the nonprofit’s mission needed to be expanded, or more decision making and leadership over its broader pursuits needed to be devolved to subsidiary entities operating its Generative AI business.

→ Failing to Anticipate Obvious Ramifications. The ultimate governance failure was the board’s failure to anticipate the extreme disruption to OpenAI that its actions would introduce. All of the failures above may be a function of a more basic lack of leadership savvy, experience and expertise. Even so, the kind of fallout seen since Friday should have been expected. Since the public launch of ChatGPT one year ago, Sam Altman has become a leading figure in the global AI movement. He has functioned as a credible, articulate ambassador for both the amazing potential of AI and its risks. Moreover, he has been a capable steward of financing and product development at the company, beloved by investors, employees and Silicon Valley leadership more broadly. For many, Sam Altman is not only the face and voice of OpenAI; he IS the company. In such situations, it is incumbent on a board to lay sufficient groundwork for an ouster. Having a coherent succession plan, preparing a sophisticated messaging plan and connecting confidentially with key stakeholders in advance would all be critical. None of these measures appears to have been undertaken at all, or at least not with sufficient forethought and planning. It is as yet unclear whether the board had sound counsel, not only about the technical viability of terminating Mr. Altman, but also about the propriety of doing so under the circumstances in which the termination unfolded.

Alternative Structures with Aligned Incentives

The commercial success of ChatGPT, a public beta research project, caught everyone off guard. It created a commercial opportunity zone no one expected and no one had planned for. No one could have, because the runaway success of ChatGPT and its industry-transforming impact had no precedent in technology history. The nonprofit needed to evolve but instead lost board members to conflicts and failed to replace or supplement them with additional experience or skill sets appropriate to what OpenAI was becoming in 2023. Because it did not evolve, trust broke down, the remaining board majority exercised unsound judgment, and chaos ensued. Mr. Altman does share in the responsibility for not attending to the very governance deficiencies that arose to undermine him, though, perhaps, his trusting nature is generally a virtue. Even the well-intentioned can be undone by structures that don’t adequately align incentives, contain proper safeguards or sufficiently define decision-making procedures. Many may be tempted to impugn all alternative structures as a result. I do not believe this is warranted.

A nonprofit was not the best vehicle for OpenAI’s ambitions in 2015, and certainly not in 2019, when it first revisited its corporate structure. Since 2013, the state of Delaware has provided for public benefit corporations (PBCs). This corporate form recognizes that a company may have goals beyond profit maximization and stakeholders, beyond its shareholders, to whom it wishes to be accountable. Importantly, PBCs can embrace both the pursuit of profit and social benefit goals in a cohesive corporate structure. A PBC’s board can include investors, and decision making at the board level must take into account the interests of all identified stakeholders at all times.

This is the form of corporation that Anthropic elected to create, following its founders’ disillusionment with OpenAI’s approach. In addition to a PBC, Anthropic also formed a long term benefit trust (LTBT). By design, the LTBT is a rotating body whose members serve year-long terms. The LTBT is “purpose” driven, and its members are meant to keep the broader mission for socially beneficial uses of AI in mind within the scope of their authority and decision making. They can select the vast majority of the PBC’s board members, but do not themselves sit on the Anthropic board. As a novel corporate structure, the Anthropic LTBT + PBC builds in some design flexibility, acknowledging that some fine-tuning may be desirable along the way. This kind of structure has yet to be tested, either by a crisis or by litigation. However, based on its contours we can readily see how it would be more resilient to the kind of crisis faced by OpenAI. Had OpenAI had a structure more along these lines, not only might a termination event never have been reached, but any such event would likely have been handled much more responsibly than what we have seen over the past several days.

Copyright © 2023 Duane R. Valz. Published here under a Creative Commons Attribution-NonCommercial 4.0 International License

The author works in the field of machine learning/artificial intelligence. The views expressed herein are his own and do not reflect any positions or perspectives of current or former employers.
