Migrating Code from S3 to a New GitHub Repository

ACM.232 Migrating a website in an S3 bucket to GitHub

~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~

⚙️ Part of my series on Automating Cybersecurity Metrics. The Code.

~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~

In this post I’m going to create a new repository using the code I created in an earlier post and migrate files in an existing S3 bucket to that repository.

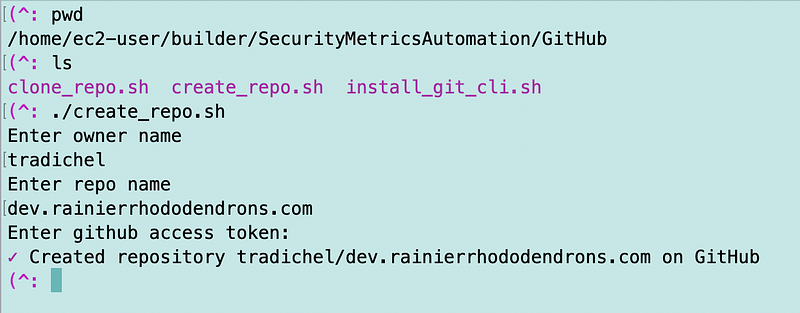

I added a folder named GitHub to my framework. Within that folder you’ll find the create_respository.sh script I wrote about earlier.

Following the steps and using the code from that prior post, I create my new repo.

You can’t see the repository because the script creates private repositories.

Now, to copy the files from the old location, I set up a developer access key and secret key in that AWS account. I set up a profile to work directly in that account since I don't have much in it and I'm shutting it down. All my new code will require MFA.

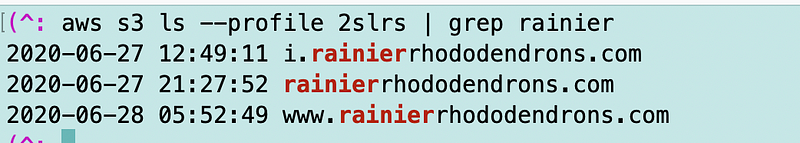

I set up an AWS CLI profile named 2slrs using those credentials.

aws configure --profile 2slrs

#follow the prompts to enter the credentials, region and output (I used json for output)

I run the following command to find all the buckets with rainier in the name.
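A bucket search like this can be scripted with the AWS CLI. The following is a minimal sketch, not necessarily the exact command I ran: the function name list_buckets_matching is hypothetical, and it assumes the AWS CLI is installed and the profile has permission to list buckets.

```shell
# List S3 buckets whose names contain a pattern.
# Arguments: $1 = text to look for, $2 = AWS CLI profile name.
# (list_buckets_matching is an illustrative name, not part of the AWS CLI.)
list_buckets_matching() {
  pattern="$1"
  profile="$2"
  # `aws s3 ls` prints one line per bucket: creation date, time, bucket name.
  # awk keeps only the name column; grep -F matches the pattern literally.
  aws s3 ls --profile "$profile" | awk '{print $3}' | grep -F -- "$pattern"
}
```

With the profile from this post, the call would be: list_buckets_matching rainier 2slrs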

As you can see I have a bucket named i.rainierrhododendrons.com. That’s the bucket and related domain name for all my images. All my code for both dev and prod could point to the same images. Alternatively, I could set up a bucket for dev images and a bucket for prod images. If you have a highly lucrative site you might opt for the latter.

Currently all my code references i.rainierrhododendrons.com/ so I'm going to leave that bucket and the related images where they are for now and only move the html pages.

I change into the repository directory created by the repository creation script.

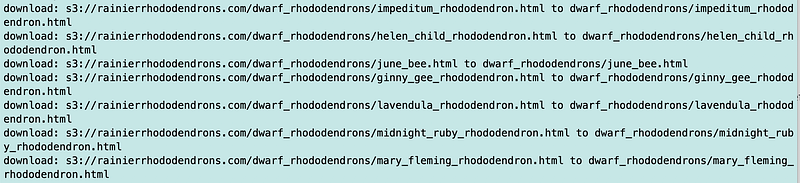

I run the sync command against the existing website bucket.

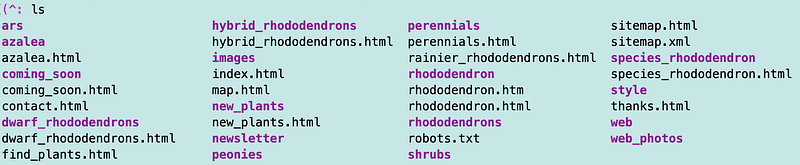
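For anyone who wants to script the copy, here is a hedged sketch of wrapping aws s3 sync in a small function. The function name sync_bucket_to_dir and the bucket name in the usage note are placeholders I made up for illustration. The sketch assumes the profile can read the bucket.

```shell
# Copy the contents of an S3 bucket into a local directory.
# Arguments: $1 = bucket name, $2 = local directory, $3 = AWS CLI profile,
#            $4 = optional extra flag such as --dryrun.
# (sync_bucket_to_dir is an illustrative name, not part of the AWS CLI.)
sync_bucket_to_dir() {
  bucket="$1"
  dest="$2"
  profile="$3"
  extra="${4:-}"
  # No --delete flag is passed, so files already in the directory
  # (including the .git metadata) are left in place.
  # ${extra:+"$extra"} adds the extra flag only when one was given.
  aws s3 sync "s3://$bucket" "$dest" --profile "$profile" ${extra:+"$extra"}
}
```

Running sync_bucket_to_dir your-website-bucket . 2slrs --dryrun first shows what would be copied without writing any files. Dropping --dryrun performs the copy.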

That worked:

The files are now in the repository directory.

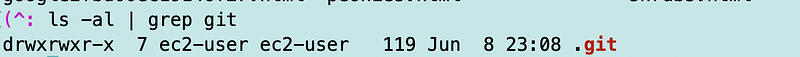

Now I can check them into the new repository. A cloned repository contains a .git directory created by git, which we can check for like this:

Because the directory name starts with a period, you won't see it with ls unless you use the -a option (for example, ls -al). This directory has the git metadata files that tell git where to push the files.
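The check for the git metadata can also be done in a script. This is a small sketch under the assumption that git is installed. Both function names are hypothetical helpers, not git commands.

```shell
# Return success when the given directory (default: current directory)
# contains the .git metadata directory that git creates on clone or init.
has_git_dir() {
  [ -d "${1:-.}/.git" ]
}

# Print the URL git will push to for the remote named origin.
# Assumes git is installed and the directory is a git repository.
show_push_target() {
  git -C "${1:-.}" remote get-url --push origin
}
```

From inside the repository directory, has_git_dir && show_push_target prints the push URL only when the .git directory is present.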

Now recall that in a prior post I configured caching with git credential.helper.
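One way to see whether that setting is still in place is to read it back from the global git config. A minimal sketch, assuming git is installed. The function name show_credential_helper is made up for this example.

```shell
# Print the global credential helper setting, or a note when none is set.
# (show_credential_helper is an illustrative name, not a git command.)
show_credential_helper() {
  # `git config --get` exits non-zero when the key is missing, so fall
  # back to an empty string instead of failing.
  helper=$(git config --global --get credential.helper || true)
  if [ -n "$helper" ]; then
    printf 'credential.helper = %s\n' "$helper"
  else
    printf 'credential.helper is not set\n'
  fi
}
```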

That should still be configured but to make sure I ran:

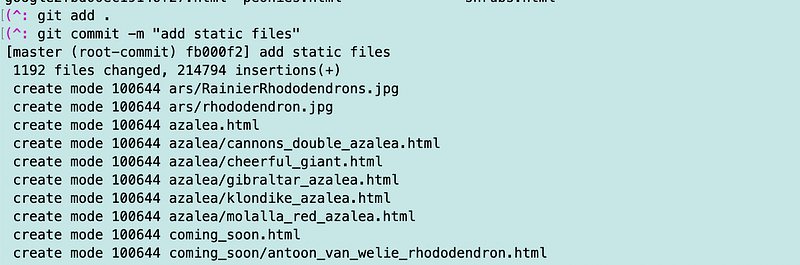

git config --global credential.helper 'cache --timeout 120'

The first two commands worked fine.

The last command gave me grief — same as before.

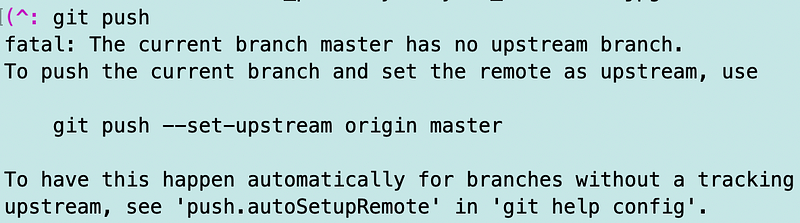

So let’s follow the instructions and see what happens. It says to run this command:

git push --set-upstream origin master

That didn't work. I could try to troubleshoot this but honestly, the easiest way I've found to fix this is to move the local repository directory out of the way and re-clone it.

cd .. #cd into your code directory containing the repository folder

mv dev.rainierrhododendrons.com bak.dev.rr.com

git clone [repository url]

cd dev.rainierrhododendrons.com

#repeat the commands above

s3 sync...

git add .

git commit -m "add static files"

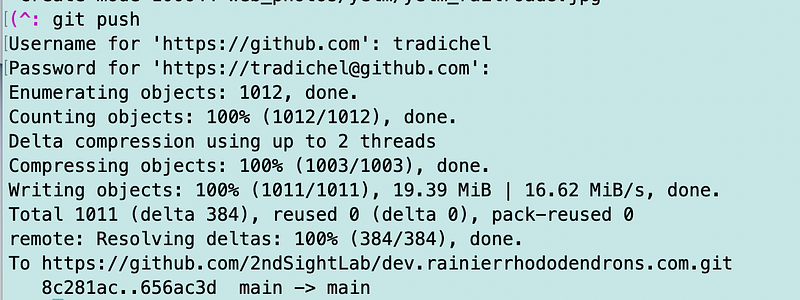

git push

That works fine.
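If you need to repeat this more than once, the re-clone steps can be bundled into one function that stops at the first failure. This is only a sketch under my own assumptions: the function name reclone_and_import, its parameters, and the bak. prefix for the backup directory are all hypothetical, and it expects git and the AWS CLI to be installed and authenticated.

```shell
# Back up an existing working copy, re-clone the repository, pull the site
# files from S3 into it, then commit and push.
# Arguments: $1 = repository URL, $2 = local directory name,
#            $3 = S3 bucket name, $4 = AWS CLI profile.
# The body runs in a subshell, so the caller's working directory is unchanged.
reclone_and_import() (
  repo_url="$1"
  repo_dir="$2"
  bucket="$3"
  profile="$4"
  # Keep the old working copy as a backup rather than deleting it.
  if [ -d "$repo_dir" ]; then
    mv "$repo_dir" "bak.$repo_dir" || exit 1
  fi
  # Each step runs only if the previous one succeeded.
  git clone "$repo_url" "$repo_dir" &&
    cd "$repo_dir" &&
    aws s3 sync "s3://$bucket" . --profile "$profile" &&
    git add . &&
    git commit -m "add static files" &&
    git push
)
```

Run it from the code directory that contains the repository folder, passing your own repository URL and bucket name.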

Now I have a GitHub repository with all my existing website files in it.

Teri Radichel | © 2nd Sight Lab 2023
