Easily Understand Generative AI in 7 Minutes

Enhance Your Understanding and Stay Ahead

Generative AI has become a big deal lately. Remember when ChatGPT came out in November 2022? In no time, it became the fastest-growing consumer app up to that point to reach 100 million users.

If you’re reading this, you have almost certainly used ChatGPT, Bard, or Microsoft Copilot, right? 🙂

AI has been around for a while, doing all sorts of stuff. But what made ChatGPT so special, and what drew so much attention, was its ability to chat like a human and create content from simple prompts.

This created a wave of possibilities for businesses and got everyone’s attention. People started asking: What is this ‘new’ AI, or Generative AI, and how does it work?

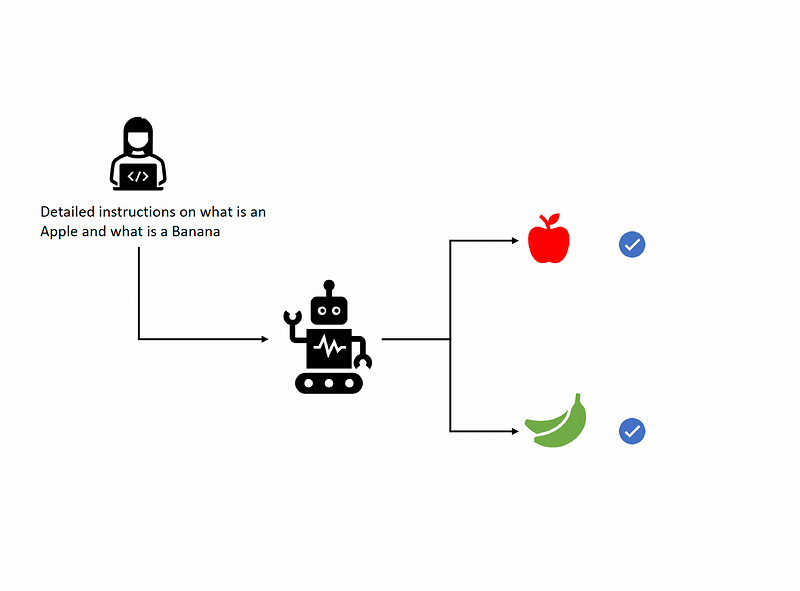

Before we dive into Generative AI, let’s talk about Artificial Intelligence (AI). AI is all about teaching machines to do things that humans do. For example, imagine a robot that sorts apples and bananas. You tell it what’s an apple and what’s a banana, and it does its job well.

How do you tell the machine? You program explicit instructions (rules) that define what an apple is and what a banana is.
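
To make “explicit instructions” concrete, here is a minimal Python sketch of a hand-written sorter. The features (`color`, `shape`) and the function name `classify_by_rules` are hypothetical, chosen only for illustration. A real robot would work from camera images rather than tidy labels.

```python
def classify_by_rules(color: str, shape: str) -> str:
    """Sort a fruit using hand-written rules (hypothetical features)."""
    # Every rule is written by a programmer; nothing is learned from data.
    if color == "red" and shape == "round":
        return "apple"
    if color == "yellow" and shape == "long":
        return "banana"
    # Anything the rules did not anticipate falls through.
    return "unknown"


print(classify_by_rules("red", "round"))    # apple
print(classify_by_rules("yellow", "long"))  # banana
print(classify_by_rules("green", "round"))  # unknown
```

Because every rule is written by hand, any fruit the programmer did not anticipate, such as one in an unexpected color, simply falls through to `"unknown"`.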

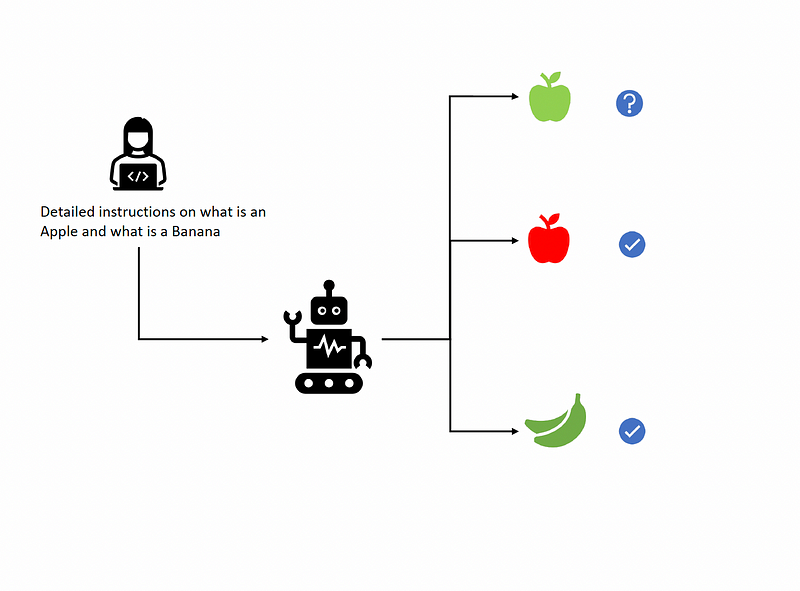

Now, imagine a scenario. The robot is doing a pretty cool job until it comes across a green apple. It has only been programmed to recognize red apples, so it does not know what to do with green ones.

This is where Machine Learning, or ML, comes in.

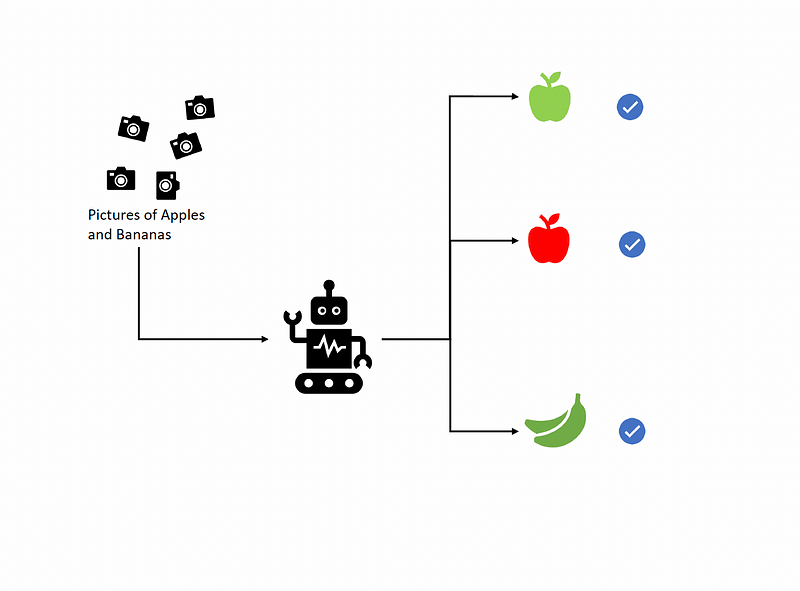

ML refers to the capability of machines to learn from data. In the fruit sorting robot case, the robot is shown many pictures of apples and bananas. The machine learns the patterns and shapes within the pictures to infer what an apple looks like and what a banana looks like.

When the robot comes across the green apple, it has never been shown a picture of one. Still, it knows that this is an apple, based on the other attributes it has learned about apples, such as their shape.
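
Here is a minimal sketch of the same idea with learning, assuming each fruit is summarized by two hypothetical numbers: its hue (color angle in degrees) and its length-to-width ratio. The learning method, Gaussian naive Bayes, is my choice of one simple technique among many; the robot in the story could use any of them. The training apples include red and yellow varieties, but no green ones.

```python
import math
from statistics import mean, pstdev

# Hypothetical training data: (hue in degrees, length-to-width ratio).
# The apples include red and yellow varieties, but none are green.
TRAINING = {
    "apple": [(0, 1.0), (10, 1.1), (50, 0.9), (55, 1.0)],
    "banana": [(50, 3.0), (55, 3.2), (60, 2.8)],
}


def fit(data):
    """Learn the mean and spread of every feature for every class."""
    model = {}
    for label, samples in data.items():
        columns = zip(*samples)  # regroup as one tuple per feature
        # The small constant keeps a spread from ever being exactly zero.
        model[label] = [(mean(col), pstdev(col) + 1e-3) for col in columns]
    return model


def log_density(x, mu, sigma):
    """Log of the normal (Gaussian) density N(mu, sigma^2) evaluated at x."""
    return -math.log(sigma * math.sqrt(2 * math.pi)) - (x - mu) ** 2 / (2 * sigma**2)


def predict(model, fruit):
    """Pick the class whose learned distributions best explain the fruit.

    Assumes equal prior odds for apples and bananas (a simplification).
    """
    scores = {
        label: sum(log_density(x, mu, sigma) for x, (mu, sigma) in zip(fruit, params))
        for label, params in model.items()
    }
    return max(scores, key=scores.get)


model = fit(TRAINING)
print(predict(model, (100, 1.0)))  # a green apple, never seen in training: apple
```

Because color varies a lot among the training apples while their shape barely does, the learned model relies heavily on shape, so the never-seen green apple `(100, 1.0)` is still sorted as an apple.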

So, you can see how cool and useful machine learning is, right? Wait till you learn how cool Generative AI is.

Generative AI takes things a step further. It doesn’t just recognize patterns; it creates new stuff based on what it knows. Imagine our apple and banana sorting robot creating a brand-new fruit that’s a mix of both!

Deep Learning is the secret sauce behind Generative AI. It’s like a super-smart brain that can process tons of data and find patterns in it. In general, the more good-quality data you give it, the better it performs.

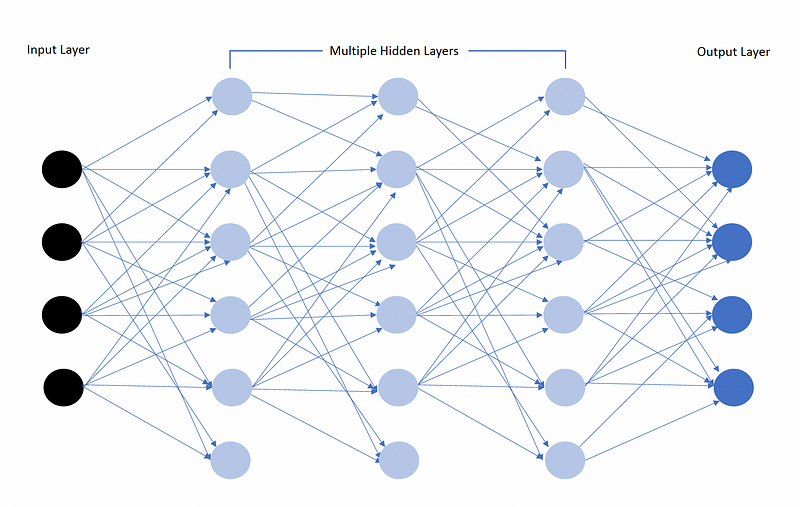

Inspired by the human brain, Deep Learning models are built from Artificial Neural Networks: an input layer, followed by multiple hidden layers, leading into an output layer. The hidden layers are where most of the complex computation happens.
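
The layered structure can be sketched in a few lines of plain Python. This is a minimal, untrained network under assumed choices: two inputs, two hidden layers of four units each, two outputs (say, apple and banana), random starting weights, and the commonly used ReLU and softmax functions. Training, which would adjust the weights from data, is left out.

```python
import math
import random


def dense_layer(inputs, weights, biases):
    """Fully connected layer: each output is a weighted sum of all inputs plus a bias."""
    return [sum(w * x for w, x in zip(row, inputs)) + b for row, b in zip(weights, biases)]


def relu(values):
    """Non-linearity applied after each hidden layer."""
    return [max(0.0, v) for v in values]


def softmax(values):
    """Turn raw output scores into probabilities that sum to 1."""
    m = max(values)
    exps = [math.exp(v - m) for v in values]
    total = sum(exps)
    return [e / total for e in exps]


def random_layer(n_in, n_out, rng):
    """Random starting weights; training would adjust these from data."""
    weights = [[rng.uniform(-1, 1) for _ in range(n_in)] for _ in range(n_out)]
    return weights, [0.0] * n_out


rng = random.Random(0)
sizes = [2, 4, 4, 2]  # input layer -> two hidden layers -> output layer
layers = [random_layer(a, b, rng) for a, b in zip(sizes, sizes[1:])]


def forward(x):
    """Pass an input through the hidden layers and into the output layer."""
    for i, (weights, biases) in enumerate(layers):
        x = dense_layer(x, weights, biases)
        if i < len(layers) - 1:  # hidden layers only
            x = relu(x)
    return softmax(x)


probs = forward([0.2, 0.9])
print(probs)  # two probabilities that sum to 1
```

Since the weights are random, these probabilities mean nothing yet; deep networks simply stack many more and much wider layers of the same kind.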

This led to the creation of Foundation Models like GPT-3 and BERT. These models are like super-flexible tools that can do lots of different tasks because they’ve been trained on a ton of data.

But how does ChatGPT chat like a human? That’s where Large Language Models (LLMs) come in. LLMs are a type of Foundation Model trained on huge amounts of text to predict which word comes next in a sentence, which makes them great at writing and talking like humans.

You might be wondering how enormous the data is. It is vast, spanning many domains and a wide variety of sources. For instance, GPT-3, one of the most popular models developed by OpenAI, was trained on hundreds of gigabytes of text drawn from books, websites, and other sources.

Another example is Google’s BERT model, which was trained on the text passages of English Wikipedia (2,500 million words) along with the BooksCorpus dataset of books (800 million words).

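Image recognition models like ResNet are trained on large labeled image datasets such as ImageNet. The full ImageNet dataset has over 14 million hand-annotated images spanning more than 20,000 categories; ResNet itself was trained on its widely used 1,000-category subset of about 1.28 million images.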

These examples highlight the scale of data that foundation models are trained on, enabling them to learn complex patterns and apply their knowledge across a wide range of tasks and scenarios.

Imagine the apple and banana sorting robot. Apart from feeding the robot pictures of apples and bananas, you also feed it tons and tons of data ranging from vegetables to electronics to astronomy to history.

Now, the robot is not only capable of sorting fruits but can also identify a wide range of objects and subjects. This is the core concept of Foundation models: they are trained on a vast scale of data from multiple domains and can apply their knowledge to a myriad of tasks and scenarios.

By this point, you might be wondering: Is an LLM really that intelligent?

LLMs do not have cognitive capabilities the way we humans do. However, thanks to their huge training data, they are very good at predicting the next word. An LLM predicts the next word in a sentence, adds that word to the text, runs its computations again on the longer text to predict the word after that, and so on. (Strictly speaking, models work with tokens, which can be whole words or pieces of words.) This is how it is able to write articles, converse with us like humans, and summarize texts.
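
The predict, append, repeat loop can be sketched with a drastically simplified stand-in for an LLM: a bigram model that looks only at the last word and picks whichever word most often followed it in a tiny, made-up corpus. A real LLM uses a Transformer to score every possible next token given all of the text so far, but the generation loop has the same shape.

```python
from collections import Counter, defaultdict

# A tiny, made-up corpus standing in for an LLM's training data.
corpus = "the robot sorts apples . the robot sorts bananas . the robot learns fast ."


def train_bigrams(text):
    """Count, for every word, which words follow it and how often."""
    words = text.split()
    follows = defaultdict(Counter)
    for current, nxt in zip(words, words[1:]):
        follows[current][nxt] += 1
    return follows


def generate(follows, prompt, max_new_words=5):
    """Repeatedly predict the most likely next word and append it to the text."""
    words = prompt.split()
    for _ in range(max_new_words):
        candidates = follows.get(words[-1])
        if not candidates:
            break  # this word was never followed by anything in training
        next_word = candidates.most_common(1)[0][0]  # ties go to the first seen
        words.append(next_word)  # the output becomes part of the next input
    return " ".join(words)


model = train_bigrams(corpus)
print(generate(model, "the", max_new_words=3))  # the robot sorts apples
```

Picking the single most likely word every time is called greedy decoding; chat systems usually sample from the predicted probabilities instead, which is why the same prompt can give different answers.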

This cool ability of LLMs comes not only from the vast amount of training data. It also stems from their Transformer architecture.

Do you remember the Transformers movie? If you haven’t seen it, don’t worry. It has nothing to do with all of this 🙂

The Transformer architecture helps computers understand and generate human language. Transformers are really good at understanding the context of language, such as how the meaning of a word can change depending on the words around it. They do this with a mechanism called attention, which gives more importance to the most relevant parts of the text they’re looking at. This is why Transformers are so important in helping machines understand and generate text in a way that makes sense to us humans.
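
Here is a minimal sketch of scaled dot-product attention, the form of attention used in the original Transformer, computed for a single word. The three-word phrase and its 2-dimensional vectors are hand-picked and hypothetical. In a real Transformer, the query, key, and value vectors are produced by learned weights, have hundreds of dimensions, and many attention heads run in parallel.

```python
import math


def softmax(scores):
    """Turn relevance scores into weights that sum to 1."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def attention(query, keys, values):
    """Scaled dot-product attention for a single query vector."""
    d = len(query)
    # How relevant each word is to the query: a dot product scaled by sqrt(d).
    scores = [sum(q * k for q, k in zip(query, key)) / math.sqrt(d) for key in keys]
    weights = softmax(scores)
    # The result is a weighted mix of the value vectors.
    output = [sum(w * v[i] for w, v in zip(weights, values)) for i in range(len(values[0]))]
    return weights, output


# Hypothetical vectors for the phrase "the river bank"; we ask what "bank" attends to.
tokens = ["the", "river", "bank"]
keys = [[0.0, 0.1], [2.0, 0.0], [1.0, 1.0]]
values = keys  # reuse the keys as values to keep the example small
query = [2.0, 0.0]  # hypothetical query vector for "bank"

weights, output = attention(query, keys, values)
print(tokens[weights.index(max(weights))])  # river
```

Here “river” gets the largest weight, so the new representation of “bank” is pulled toward the river sense of the word rather than the money sense.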

It is these LLMs (and other similar technologies) that have powered Generative AI.

Generative AI, as the name suggests, has the capability to generate new content. This is indeed a ground-breaking innovation, and we are just starting to see how it is being applied across businesses.

As mentioned at the beginning, AI is not new. It has been in action for many years now, in various forms. For example, when Netflix recommends movies to you, that is AI. When Amazon shows you product recommendations while you shop, that is AI too.

Ok, so what makes Generative AI different from this? To understand it, let’s look at a scenario:

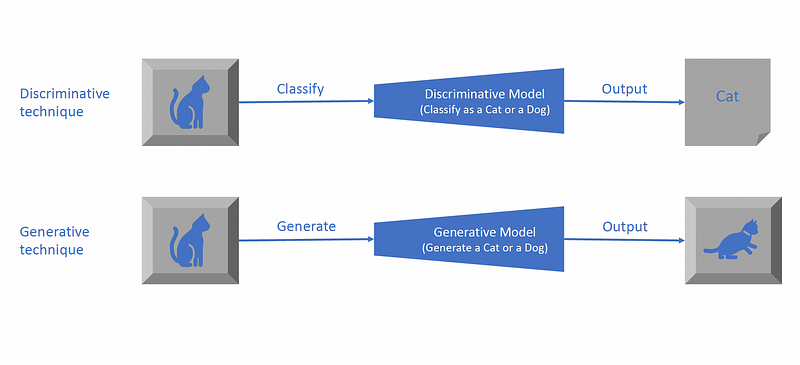

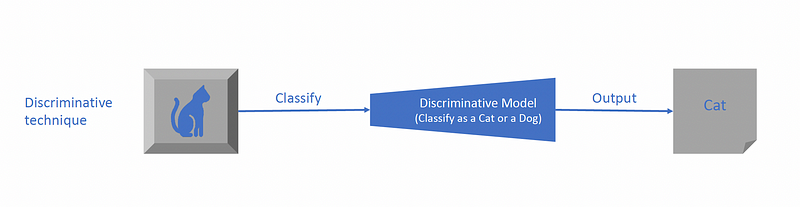

This is how traditional AI works: You have a model that is able to differentiate between a cat and a dog when shown pictures. You show a picture of a cat, and it classifies it as a cat.

Now, you have a Generative AI model that can generate content. You give it a prompt, say, “draw a picture of a cat”, and the model generates a picture of a cat. The resulting picture might show a cat unlike any the model was trained on. In other words, the model creates the cat from the prompt you provided and what it has already learned about cats.
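
The difference between classifying and generating can be sketched with numbers instead of pictures. Assume each cat is described by two hypothetical measurements (body length and tail length, in cm). A generative model learns the distribution of these measurements and then samples new cats from it. This toy version treats the two features independently and uses a normal distribution for each; real image generators, such as diffusion models, learn the joint distribution of millions of pixels with deep neural networks.

```python
import random
from statistics import mean, stdev

# Hypothetical training cats: (body length in cm, tail length in cm).
cats = [(46, 28), (50, 30), (44, 25), (48, 29), (52, 31)]


def fit_generator(examples):
    """Learn the mean and spread of each measurement from the training cats."""
    return [(mean(col), stdev(col)) for col in zip(*examples)]


def generate_cat(params, rng):
    """Sample a brand-new cat from the learned per-feature distributions."""
    return tuple(round(rng.gauss(mu, sigma), 1) for mu, sigma in params)


params = fit_generator(cats)
new_cat = generate_cat(params, random.Random(42))
print(new_cat)  # a new (body, tail) pair drawn from what the model learned
```

A classifier would only answer “cat or dog?” for a given input; this generator instead produces a new example that is almost certainly not one of the five it was trained on.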

In conclusion, Generative AI represents a monumental leap forward in artificial intelligence. By not only recognizing patterns but also creating new content from them, this ground-breaking technology has the potential to revolutionize countless industries, from art and music to product design and beyond. As we continue to explore the possibilities of Generative AI, the future of technology appears more promising than ever before.

But our exploration doesn’t end here. In the coming weeks, I’ll delve deeper into the world of Generative AI, uncovering its applications, challenges, and implications for society. I invite you to join me on this journey and share your thoughts, questions, and ideas about the exciting frontier of Generative AI. Together, let’s unlock the full potential of this transformative technology and shape the future of artificial intelligence.

This story is published on Generative AI. Connect with us on LinkedIn and follow Zeniteq to stay in the loop with the latest AI stories. Let’s shape the future of AI together!